SAS Syntax and Theme

A Sublime Text 3 package for SAS syntax highlighting and the corresponding SAS Theme

Details

Installs

- Total 18K

- Win 13K

- Mac 4K

- Linux 2K

| Platform | Jun 10 | Jun 9 | Jun 8 | Jun 7 | Jun 6 | Jun 5 | Jun 4 | Jun 3 | Jun 2 | Jun 1 | May 31 | May 30 | May 29 | May 28 | May 27 | May 26 | May 25 | May 24 | May 23 | May 22 | May 21 | May 20 | May 19 | May 18 | May 17 | May 16 | May 15 | May 14 | May 13 | May 12 | May 11 | May 10 | May 9 | May 8 | May 7 | May 6 | May 5 | May 4 | May 3 | May 2 | May 1 | Apr 30 | Apr 29 | Apr 28 | Apr 27 | Apr 26 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Windows | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 3 | 0 | 1 | 1 | 2 | 0 |
| Mac | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 2 |
| Linux | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

Readme


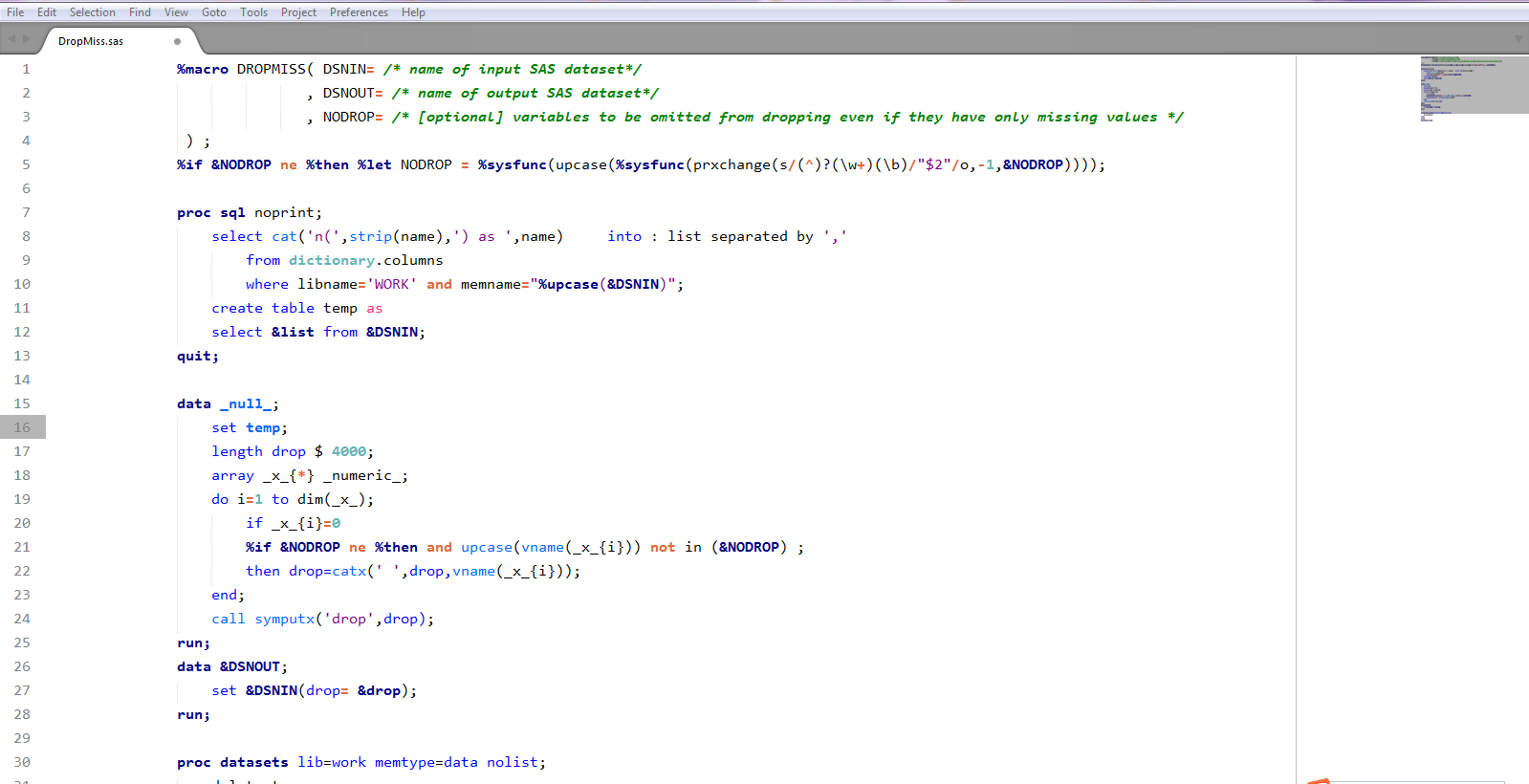

SAS Syntax Highlighting and Theme Package for Sublime Text 3

What is this?

A Sublime Text package that provides SAS syntax highlighting and a color scheme that mimics the appearance of the SAS system.

How to Install

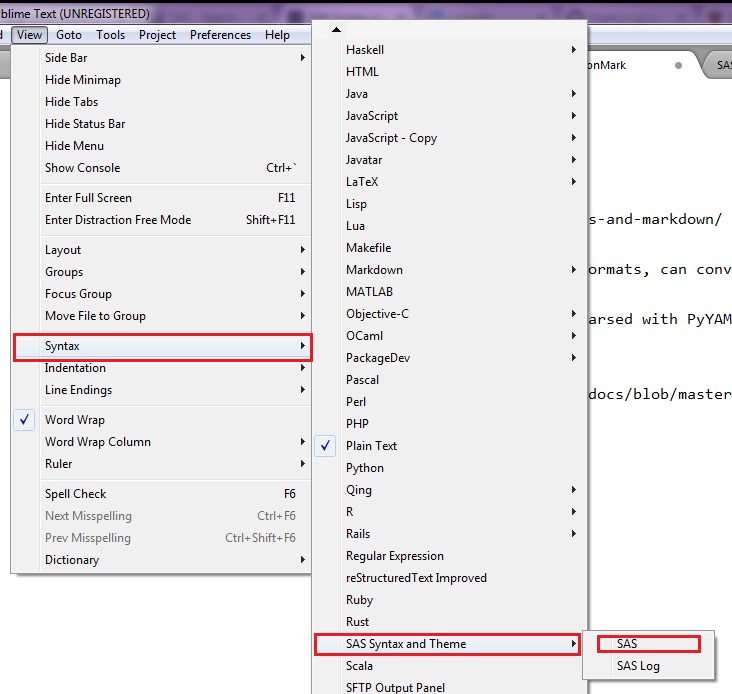

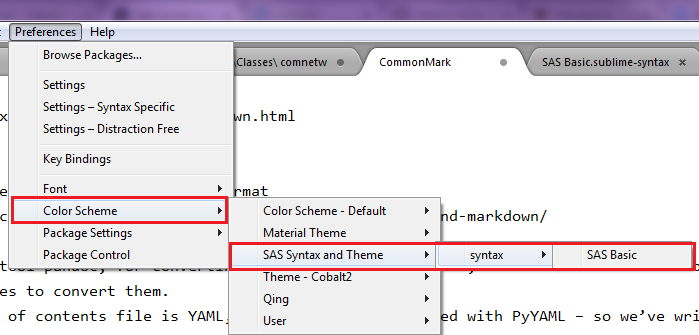

Via Package Control

The easiest way to install is using Sublime Package Control, where the package is listed as SAS-Syntax-and-Theme.

- Open the Command Palette using the menu item `Tools -> Command Palette...` (⇧⌘P on Mac, Ctrl+Shift+P on Windows and Linux)

- Choose `Package Control: Install Package`

- Find `SAS Syntax` and hit Enter

Manual

You can also install the package manually:

- Download the .zip

- Unzip and rename the folder to `SAS-Syntax-and-Theme`

- Copy the folder into the `Packages` directory, which you can find using the menu item `Sublime Text -> Preferences -> Browse Packages...` (`Preferences -> Browse Packages...` on Windows and Linux)
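If you prefer to script the manual steps, the following Python sketch copies an already-unzipped download into the `Packages` directory under the expected folder name, which covers the "rename" and "copy" steps in one go. It assumes the default Sublime Text 3 data locations for a standard (non-portable) install: `%APPDATA%\Sublime Text 3\Packages` on Windows, `~/Library/Application Support/Sublime Text 3/Packages` on macOS, and `~/.config/sublime-text-3/Packages` on Linux. `Browse Packages...` remains the authoritative way to find the folder. The helpers `default_packages_dir` and `install_unzipped` are hypothetical and are not part of this package.

```python
import os
import platform
import shutil
from pathlib import Path


def default_packages_dir(system=None, home=None, appdata=None):
    """Return the assumed default Sublime Text 3 Packages directory.

    Hypothetical helper: paths are the usual ST3 defaults for a
    non-portable install; verify with Preferences -> Browse Packages...
    """
    system = system or platform.system()
    home = Path(home) if home else Path.home()
    if system == "Windows":
        # ST3 keeps its data under %APPDATA% on Windows.
        base = Path(appdata) if appdata else Path(os.environ["APPDATA"])
        return base / "Sublime Text 3" / "Packages"
    if system == "Darwin":
        return home / "Library" / "Application Support" / "Sublime Text 3" / "Packages"
    # Linux and other Unix-like systems.
    return home / ".config" / "sublime-text-3" / "Packages"


def install_unzipped(src_dir, packages_dir, name="SAS-Syntax-and-Theme"):
    """Copy an unzipped package folder into Packages under `name`.

    Hypothetical helper: refuses to overwrite an existing install so a
    previous copy is never silently replaced.
    """
    src = Path(src_dir)
    dest = Path(packages_dir) / name
    if dest.exists():
        raise FileExistsError(f"{dest} already exists; remove it first")
    # copytree creates dest (and any missing parent directories).
    shutil.copytree(src, dest)
    return dest
```

For example, `install_unzipped(Path.home() / "Downloads" / "unzipped-folder", default_packages_dir())` would copy a download (the folder name here is only a placeholder) into place as `Packages/SAS-Syntax-and-Theme`.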

## Thanks

The SAS Programming Package developed by
